Hey, I’m Anej.¹

I’m a fourth-year PhD fellow at the ETH AI Center, working on the theoretical foundations of language models to understand what they can (and can’t) do—and using that understanding to design better and more efficient architectures.

I’m co-advised by Prof. Ryan Cotterell and Prof. Valentina Boeva. I have also spent a chunk of my PhD at the

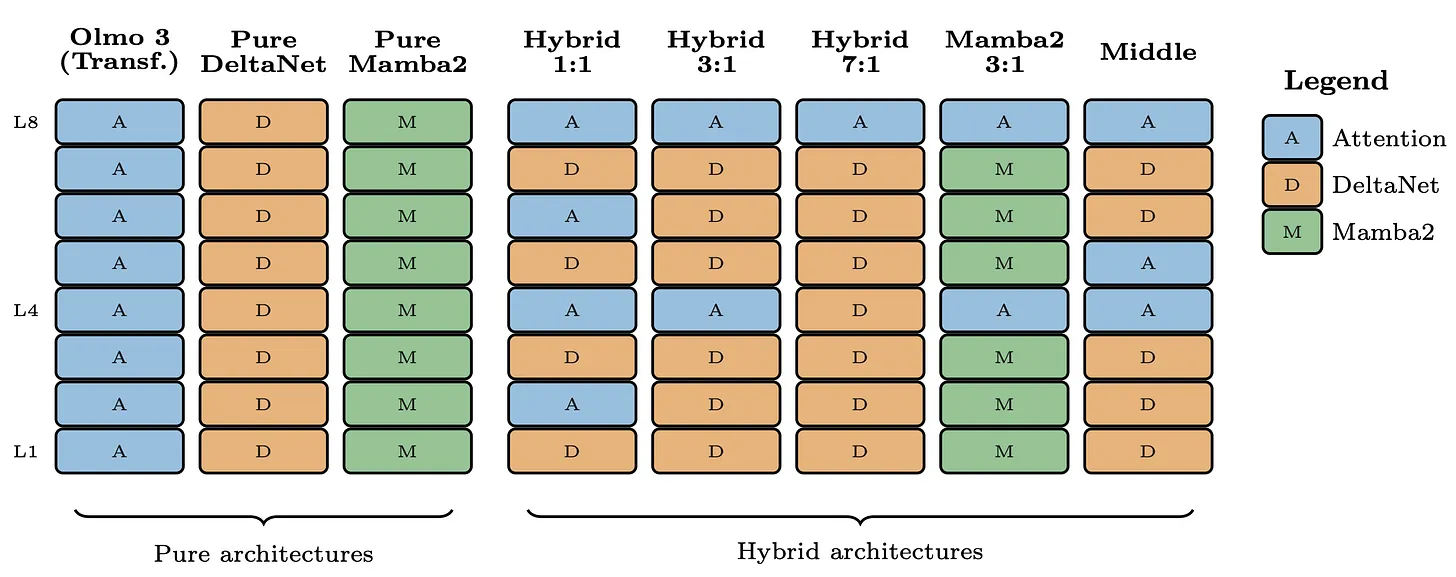

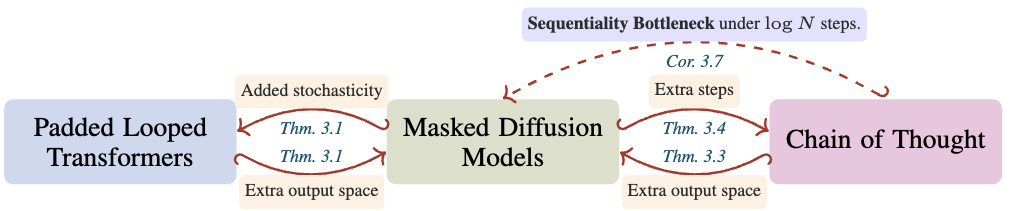

Allen Institute for AI (Ai2), where I worked with Ashish Sabharwal on reasoning and test-time scaling with masked diffusion language models. I was also a core contributor to OLMo Hybrid at Ai2, working on model design and hybrid model scaling laws. In 2026, I am visiting

Noah’s ARK lab at the University of Washington, working with Prof. Noah Smith.

I also co-organize the Formal Languages and Neural Networks (FLaNN) Seminar with Andy Yang.

Research Interests

I’m broadly interested in designing better and more efficient language model architectures, grounded in an understanding of what different architectures can and cannot do.

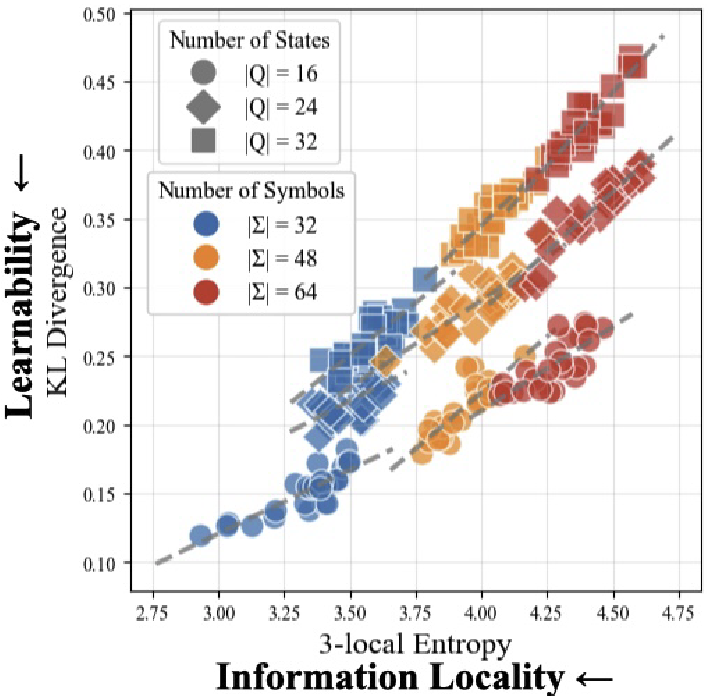

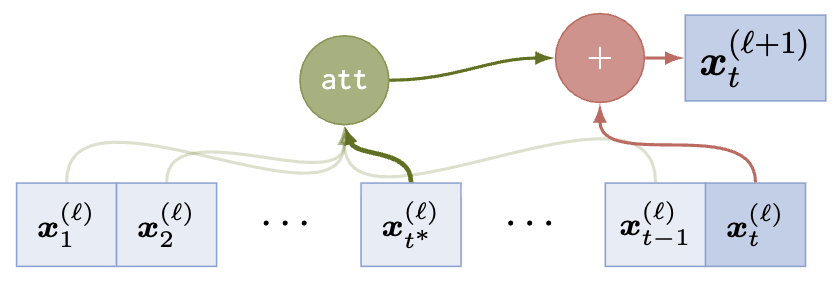

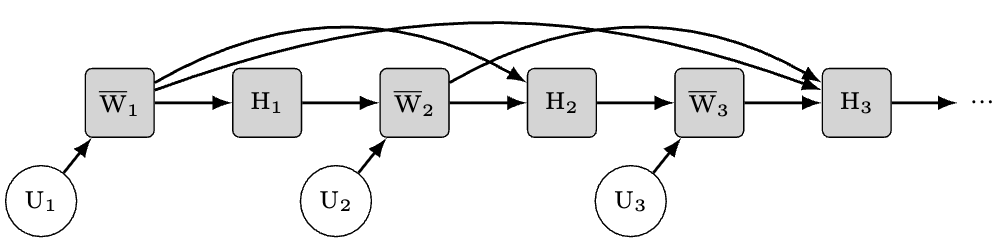

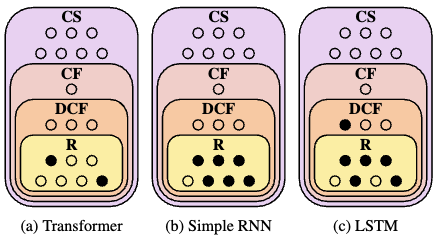

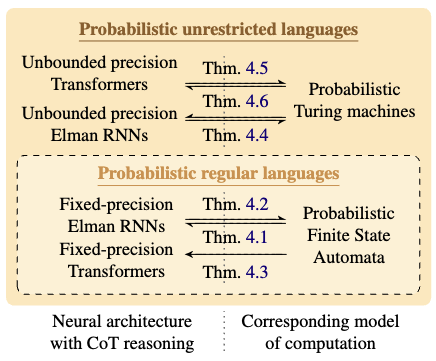

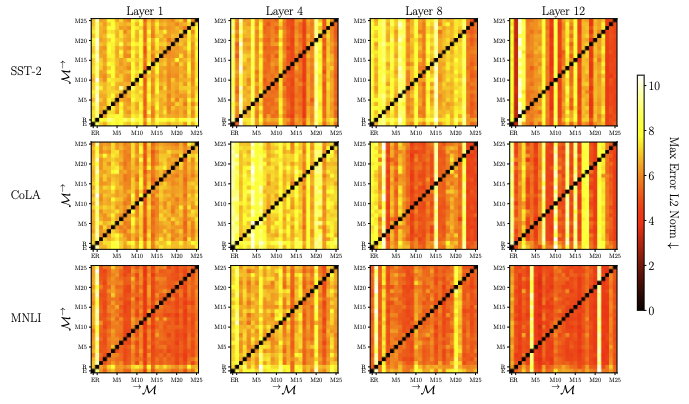

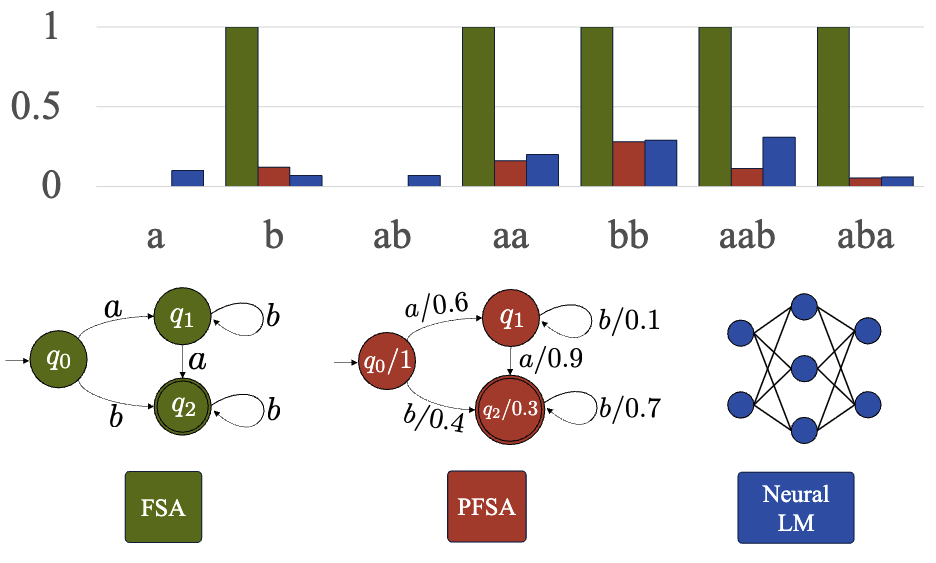

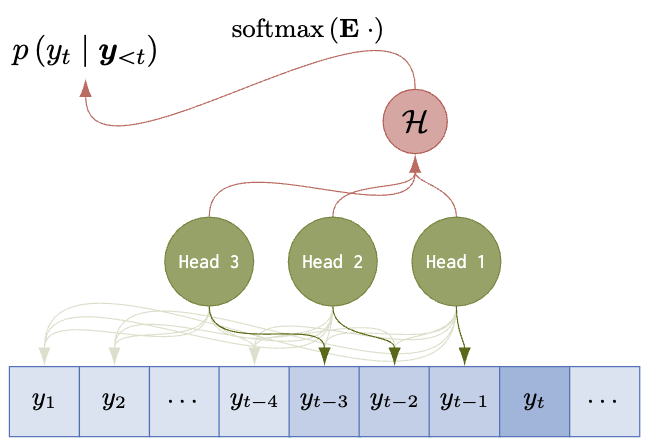

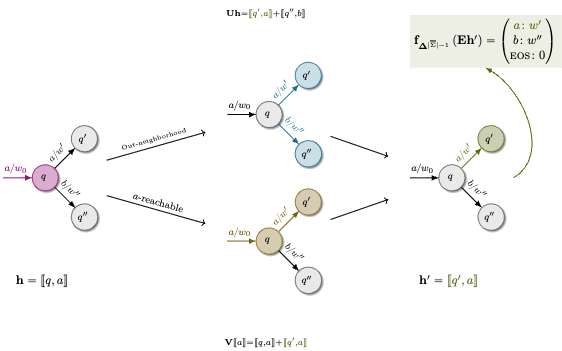

- Expressivity of neural networks: What formal languages can transformers, RNNs, linear RNNs, and hybrid models represent and learn? How does expressivity inform architecture design?

- Reasoning in language models: What happens computationally when models “think step by step”? Can we design more efficient ways for models to think?

- Diffusion models for text and looped transformers: How can we leverage parallel computation to make language models faster and more efficient?

- Expressivity and scaling: How does the expressivity of an architecture shape how it scales? Can we predict which architectures will scale better from what they can represent?

News & Upcoming

Selected Talks

Teaching

I like teaching! Highlights: Head TA for Large Language Models (~600 students, 25+ TAs) and Natural Language Processing (~300 students) at ETH; tutorials at ICML 2025 and ACL 2024; and a summer school course at ESSLLI 2023.

Selected Publications

Outside of Research

I like reading, cooking, running, and hiking. I also spend an unreasonable amount of time on aquascaping—the art of designing underwater landscapes. It’s niche, but a lot of fun.

¹ The easiest way to pronounce my name is to imagine saying “an a” in American English. Not perfect, but close enough.